
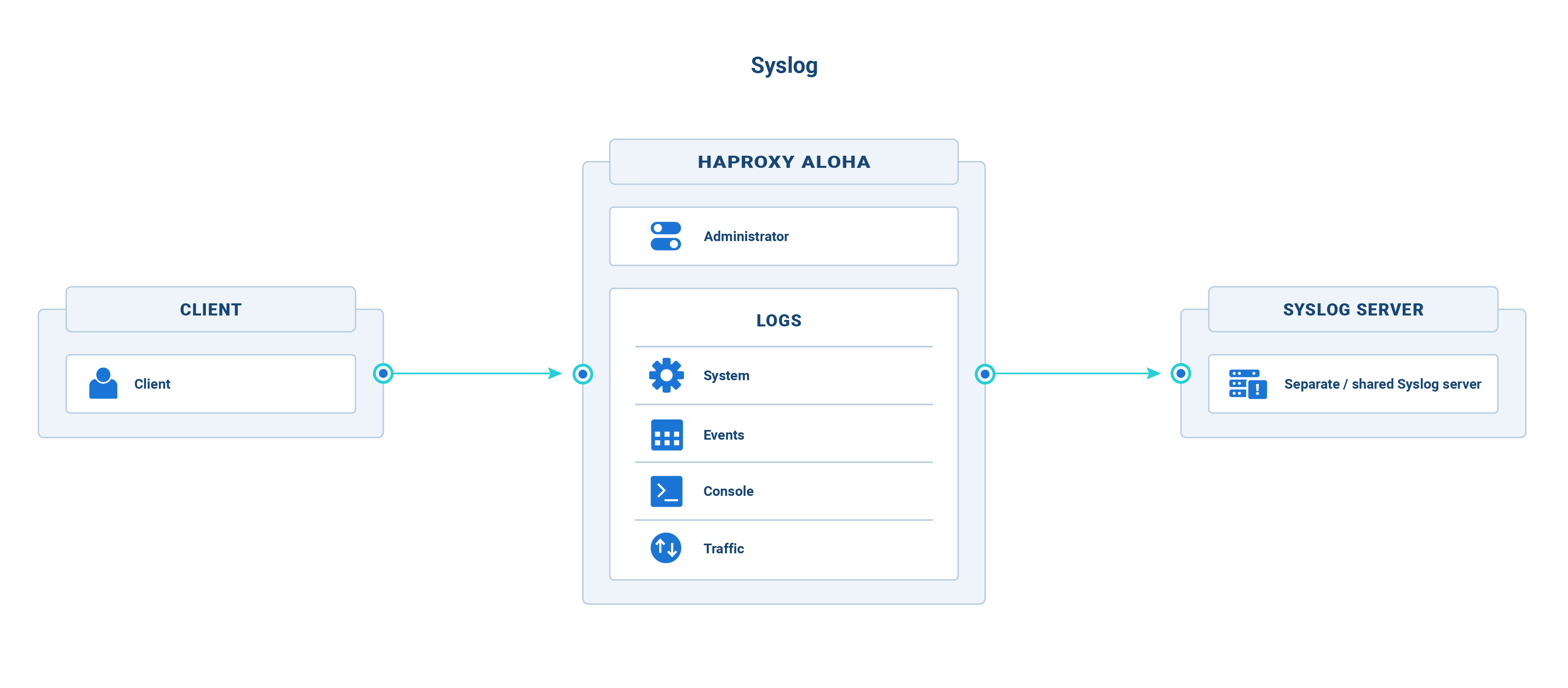

Send HAProxy ALOHA logs to an external syslog server

HAProxy ALOHA does not store logs permanently. It keeps them only in memory, not on the filesystem, so they remain viewable in the Logs tab for a limited time. For long-term storage of logs, deploy a remote syslog server and configure HAProxy ALOHA to ship logs to it.

HAProxy ALOHA generates several types of logs. You can send each type to its own syslog server or send several types to a shared one. The table below lists the types. Each type has a unique name, which you use to configure it.

| Name | Description |
|---|---|
| system | Major operating system events |
| events | Load balancer events |
| console | Administration Web UI events |
| traffic | Traffic traversing all HAProxy ALOHA frontends |

Info

- Select the Logs tab to view a limited history of in-memory logs.
- You can also log the traffic that traverses a specific HAProxy ALOHA frontend. See Log traffic from a specific frontend.

Configure the syslog server

You must configure a remote syslog server to receive log entries.

- Install a syslog server such as rsyslog. Example:

  ```shell
  sudo apt install rsyslog
  ```

- Create a file named `/etc/rsyslog.d/10-aloha.conf` with the directives below. This configuration makes rsyslog listen on all IP addresses at UDP port 514. It stores incoming log messages in the file `/var/log/aloha.log` when they come from the HAProxy ALOHA IP address.

  ```text
  # /etc/rsyslog.d/10-aloha.conf
  $ModLoad imudp
  $UDPServerRun 514
  $ActionFileDefaultTemplate RSYSLOG_TraditionalFileFormat
  if $fromhost-ip=='172.16.24.237' then /var/log/aloha.log
  ```

  The directives are as follows:

  | Directive | Description |
  |---|---|
  | `$ModLoad imudp` | Receive logs over UDP. |
  | `$UDPServerRun 514` | Start the UDP listener on the specified port. |
  | `$ActionFileDefaultTemplate RSYSLOG_TraditionalFileFormat` | Use the traditional syslog format. |
  | `if $fromhost-ip=='172.16.24.237' then /var/log/aloha.log` | Store incoming log messages in the file `/var/log/aloha.log` when they come from the HAProxy ALOHA IP address. Replace `172.16.24.237` with the IP address of your HAProxy ALOHA. You can specify several of these directives, or use `startswith` to match a range of IPs. For example, `if $fromhost-ip startswith '172.16.24.' then /var/log/aloha.log` matches every address in 172.16.24.0/24. |

- Restart the rsyslog server.

  ```shell
  sudo systemctl restart rsyslog
  ```
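You may want to confirm that rsyslog accepts UDP messages on port 514 before you configure HAProxy ALOHA. The following Python sketch uses only the standard library to send one RFC 3164-style message over UDP. The function names, the tag `aloha-test`, and the default facility and severity (local0, informational) are illustrative assumptions. They are not part of HAProxy ALOHA or rsyslog. The `$fromhost-ip` filter above writes only messages that come from the ALOHA address. A test sent from another host therefore does not appear in `/var/log/aloha.log`. Look for it in rsyslog's default log files instead, for example `/var/log/syslog` on many Debian-based systems.

```python
import socket
import time
from typing import Optional


def rfc3164_timestamp(t: time.struct_time) -> str:
    # RFC 3164 timestamps look like "Jan  3 11:12:58": the day is padded with a space.
    return f"{time.strftime('%b', t)} {t.tm_mday:>2} {time.strftime('%H:%M:%S', t)}"


def build_test_message(text: str, tag: str = "aloha-test", facility: int = 16,
                       severity: int = 6, hostname: Optional[str] = None) -> bytes:
    # PRI = facility * 8 + severity; local0 (16) and informational (6) give <134>.
    pri = facility * 8 + severity
    host = hostname or socket.gethostname()
    line = f"<{pri}>{rfc3164_timestamp(time.localtime())} {host} {tag}: {text}"
    return line.encode("utf-8")


def send_test_message(server: str, port: int = 514, text: str = "rsyslog UDP test") -> bytes:
    """Send one syslog message over UDP and return the bytes that were sent."""
    message = build_test_message(text)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (server, port))
    return message
```

For example, `send_test_message("172.16.24.236")` sends the message to the rsyslog server used in this page's examples. UDP gives no delivery confirmation, so check the rsyslog files to verify that the message arrived.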

Log operating system events

Configure the system log type to send major HAProxy ALOHA operating system events, such as kernel errors, to an external syslog server.

-

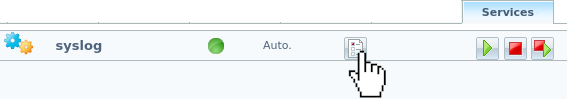

In the Services tab, click syslog setup.

-

In the

service syslog systemsection, specify the IP address and port of the destination Syslog server.Example:

Send operating system events to a syslog server listening at 172.16.24.236 on UDP port 514.

rubyservice syslog systemserver 172.16.24.236:514rubyservice syslog systemserver 172.16.24.236:514 -

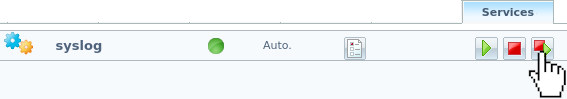

Restart the

syslogservice.

The

Message 7010: Last action returns successmessage displays.

Log load balancer events

Configure the events log type to send errors that occur when the load balancer starts or stops, along with related errors and warnings, to an external syslog server.

- In the `service syslog events` section, specify the IP address and port of the destination syslog server. Example:

  Send load balancer errors to a syslog server listening at 172.16.24.236 on UDP port 514.

  ```text
  service syslog events
    server 172.16.24.236:514
  ```

- Restart the syslog service.

Log administration events

Configure the console log type to send administration events, such as logins to the HAProxy ALOHA command-line interface, to an external syslog server.

- In the `service syslog console` section, specify the IP address and port of the destination syslog server. Below, we send login events to a syslog server listening at 172.16.24.236 on UDP port 514.

  ```text
  service syslog console
    server 172.16.24.236:514
  ```

- Restart the syslog service.

Log traffic from all frontends

Configure the traffic log type to send traffic logs for all frontends to an external syslog server.

- In the `service syslog traffic` section, specify the IP address and port of the destination syslog server. Below, we send traffic logs to a syslog server listening at 172.16.24.236 on UDP port 514.

  ```text
  service syslog traffic
    server 172.16.24.236:514
  ```

- Restart the syslog service.

Log traffic from a specific frontend

You can log traffic that traverses a specific HAProxy ALOHA frontend.

- On the remote rsyslog server, edit the file `/etc/rsyslog.d/10-aloha.conf`. Below, we capture messages from the syslog facilities local0 and local1 and write them to the files `frontend1-traffic.log` and `frontend2-traffic.log`, respectively.

  ```text
  local0.* /var/log/frontend1-traffic.log
  local1.* /var/log/frontend2-traffic.log
  ```

- On HAProxy ALOHA, add the following directive to a `frontend` section:

  ```text
  log <syslog server IP address>:<port> <facility>
  ```

  Below, we send log messages with facility local0 to an rsyslog server listening at 172.16.24.236 on UDP port 514.

  ```haproxy
  log 172.16.24.236:514 local0
  ```
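A selector such as `local0.*` matches every message whose facility is local0, whatever its severity. Each syslog message carries its facility and severity in the `<PRI>` prefix, where PRI = facility × 8 + severity and the facilities local0 to local7 use codes 16 to 23. The Python sketch below decodes that prefix and picks an output file the way the two selectors above do. The `ROUTES` table and the function names are hypothetical. A real rsyslog instance evaluates every rule in its configuration, not only these two.

```python
import re
from typing import Optional, Tuple

# Facility codes 16 to 23 are local0 to local7 (RFC 3164 / RFC 5424).
FACILITY_NAMES = {16 + i: f"local{i}" for i in range(8)}

# Hypothetical mirror of the two selectors in /etc/rsyslog.d/10-aloha.conf.
ROUTES = {
    "local0": "/var/log/frontend1-traffic.log",
    "local1": "/var/log/frontend2-traffic.log",
}

PRI_RE = re.compile(r"^<(\d{1,3})>")


def decode_pri(raw: str) -> Tuple[int, int]:
    """Split the <PRI> prefix of a raw syslog message into (facility, severity)."""
    match = PRI_RE.match(raw)
    if match is None:
        raise ValueError("message has no <PRI> prefix")
    return divmod(int(match.group(1)), 8)


def route(raw: str) -> Optional[str]:
    """Return the file a local0.* or local1.* selector would pick, or None."""
    facility, _severity = decode_pri(raw)
    # "*" after the facility matches every severity, so only the facility is checked.
    return ROUTES.get(FACILITY_NAMES.get(facility, ""))
```

For example, a message that starts with `<134>` decodes to facility 16 (local0) and severity 6 (informational), so it goes to `frontend1-traffic.log`. The same message sent with facility local1 would start with `<142>`.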

Test the setup

- Make a web request either to:

  - the HAProxy ALOHA Web UI.
  - a HAProxy ALOHA frontend.

  ```shell
  curl http://172.16.24.237:8080
  ```

- Inspect the logs on your rsyslog server.

  ```shell
  sudo less /var/log/aloha.log
  ```

  ```text
  Jan 13 11:12:58 ALOHA1 alohactl2[15685] ALOHA1# /opt/bin/alohactl2 -S root l7_dump
  Jan 13 11:12:58 ALOHA1 alohactl2[15722] ALOHA1# /opt/bin/alohactl2 -S root l4_dump
  Jan 13 11:13:04 ALOHA1 alohactl2[15859] ALOHA1# /opt/bin/alohactl2 -S root l7_dump
  Jan 13 11:52:27 172.16.24.237 haproxy[9522]: 172.29.1.90:46714 [13/Jan/2022:11:52:27.745] webservice webfarm/websrv1 0/0/0/1/1 200 818 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"
  ```

  ```shell
  sudo less /var/log/frontend1-traffic.log
  ```

  ```text
  Jan 13 14:09:38 172.16.24.237 haproxy[18201]: 172.29.1.90:40710 [13/Jan/2022:14:09:38.751] webservice webfarm/websrv1 0/0/0/1/1 200 818 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"
  Jan 13 14:23:09 172.16.24.237 haproxy[18201]: 172.29.1.90:45748 [13/Jan/2022:14:23:09.407] webservice webfarm/websrv1 0/0/0/1/1 404 304 - - --NI 1/1/0/0/0 0/0 "GET /8080 HTTP/1.1"
  Jan 13 14:23:50 172.16.24.237 haproxy[18201]: Proxy webservice stopped (cumulated conns: FE: 2, BE: 0).
  Jan 13 14:25:21 172.16.24.237 haproxy[19247]: 172.29.1.90:37120 [13/Jan/2022:14:25:21.318] webservice webfarm/websrv1 0/0/0/0/0 200 602 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"
  Jan 13 14:25:21 172.16.24.237 haproxy[19247]: 172.29.1.90:37120 [13/Jan/2022:14:25:21.548] webservice webfarm/websrv1 0/0/0/0/0 404 351 - - --VN 1/1/0/0/0 0/0 "GET /favicon.ico HTTP/1.1"
  Jan 13 14:25:37 172.16.24.237 haproxy[19247]: 172.29.1.90:37224 [13/Jan/2022:14:25:37.052] webservice webfarm/websrv1 0/0/0/0/0 200 818 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"
  ```
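The traffic lines above follow HAProxy's HTTP log layout. Each line records, in order:

- the client address and port
- the accept date
- the frontend
- the backend and server
- five timers
- the status code
- the bytes read
- the captured request and response cookies
- the termination state
- the connection counters
- the queue counters
- the quoted request line

The Python sketch below splits one such line into named fields with a regular expression. It is an assumption-laden sketch, not a complete parser. It expects the layout shown in these samples, with no captured headers. Adapt the pattern if your frontends use a custom log format.

```python
import re
from typing import Optional

# HTTP log fields after the "haproxy[pid]: " syslog tag, assuming no captured headers.
HTTPLOG_RE = re.compile(
    r"haproxy\[(?P<pid>\d+)\]: "
    r"(?P<client_ip>\S+):(?P<client_port>\d+) "
    r"\[(?P<accept_date>[^\]]+)\] "
    r"(?P<frontend>\S+) "
    r"(?P<backend>[^/\s]+)/(?P<server>\S+) "
    r"(?P<timers>\S+) "
    r"(?P<status>-?\d+) "
    r"(?P<bytes_read>\d+) "
    r"(?P<req_cookie>\S+) (?P<res_cookie>\S+) "
    r"(?P<termination_state>\S+) "
    r"(?P<conn_counts>\S+) "
    r"(?P<queues>\S+) "
    r'"(?P<request>[^"]*)"'
)


def parse_httplog(line: str) -> Optional[dict]:
    """Return the fields of one HTTP traffic line, or None for other messages."""
    match = HTTPLOG_RE.search(line)
    if match is None:
        return None  # e.g. "Proxy webservice stopped (...)"
    fields = match.groupdict()
    # Five slash-separated timers in milliseconds; -1 typically means the step never happened.
    fields["timers"] = [int(t) for t in fields["timers"].split("/")]
    fields["status"] = int(fields["status"])
    fields["bytes_read"] = int(fields["bytes_read"])
    return fields
```

Applied to the last line of `/var/log/aloha.log`, this sketch reports the frontend `webservice`, the backend `webfarm`, the server `websrv1`, and the status 200. Lifecycle messages such as `Proxy webservice stopped` return `None`.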

Syslog service reference

The syslog service in the Services tab supports the following configuration directives:

| Directive | Description |
|---|---|
| `console_level <level>` | Sets the maximum syslog severity level to send to the console. |
| `forward_timestamp` (version 13.5 and 14.0) | Adds an RFC 3164 header, which includes a timestamp and hostname, to log messages that lack one. The log's raw message changes from `<134>haproxy[26940]: Connect from 192.168.68.117:60749 to 192.168.68.124:80 (web/TCP)` to `<134>Jan 24 16:49:40 ALOHA1 haproxy[26940]: Connect from 192.168.68.117:60749 to 192.168.68.124:80 (web/TCP)`. |
| `keyid <key>` | An identifier to use for a second syslog server. |
| `listen <local IP[:port]>` | Collects UDP log messages from the given local IP address and optional port. |
| `listen_kernel` | Collects kernel messages. |
| `[no] listen_unix` | Collects messages from `/dev/log`, or does not collect them when prefixed with `no`. |
| `output "buffer"\|<filename>` | Records log messages either to a ring buffer or to a file. |
| `rotate <number>` | The number of log files to keep before rotating them. |
| `server <remote IP:port>` | The IP address and port of a remote syslog server that will receive log messages. |
| `size <kb>` | The maximum size in kilobytes of the buffer or file when `output "buffer"` or `output <filename>` is set. |
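The `forward_timestamp` transformation can be illustrated with a short Python sketch. The sketch keeps the `<PRI>` prefix and checks whether an RFC 3164 timestamp (`Mmm dd hh:mm:ss`) follows it. If no timestamp is present, it inserts the current time and a hostname. This is not the HAProxy ALOHA implementation. The function name, the timestamp pattern, and the hostname `ALOHA1` are assumptions chosen to reproduce the example in the table. The optional `now` parameter exists only to make the output deterministic.

```python
import re
import time
from typing import Optional

PRI_RE = re.compile(r"^<(\d{1,3})>")
# "Mmm dd hh:mm:ss " where a single-digit day is padded with a space.
RFC3164_TS_RE = re.compile(r"^[A-Z][a-z]{2} [ \d]\d \d{2}:\d{2}:\d{2} ")


def add_rfc3164_header(raw: str, hostname: str,
                       now: Optional[time.struct_time] = None) -> str:
    """Insert a timestamp and hostname after <PRI> if the message lacks them."""
    match = PRI_RE.match(raw)
    if match is None:
        return raw  # no <PRI> prefix: leave the message untouched
    rest = raw[match.end():]
    if RFC3164_TS_RE.match(rest):
        return raw  # header already present
    t = now if now is not None else time.localtime()
    timestamp = f"{time.strftime('%b', t)} {t.tm_mday:>2} {time.strftime('%H:%M:%S', t)}"
    return f"{match.group(0)}{timestamp} {hostname} {rest}"
```

Calling it on `<134>haproxy[26940]: Connect from 192.168.68.117:60749 to 192.168.68.124:80 (web/TCP)` with hostname `ALOHA1` and a time of January 24, 16:49:40 yields the second message shown in the table. Messages that already carry a header pass through unchanged.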
